This is How You Can Use Kubernetes

Kubernetes Logo

Introduction

In the beginning, there were single servers, each running its own operating system. Multi-user, multitasking operating systems allowed many people to share these expensive machines.

But sometimes an application had special needs in terms of libraries or application versions, and it could not run on the main server. In such cases, a completely new server had to be installed, which was expensive, so virtual machines became the solution. Multiple guest operating systems could run on a single host, where a hypervisor manages the physical resources and shares them among the guests.

This was a great improvement, but there was still a problem: each virtual machine had to run its own kernel, drivers and libraries, wasting CPU and memory. Many different attempts were made to solve this issue. One of them was Docker, created at dotCloud (a startup from the Y Combinator Summer 2010 batch) and released as open source in 2013. With Docker, each “virtual machine”, now called a container, shares the kernel with the host operating system. This way, you get less isolation but more efficiency.

But there are still issues to address, and one of them is automation. Kubernetes is one of the available tools that a small team of system administrators can use to automate the administration of a large number of servers.

What is Kubernetes?

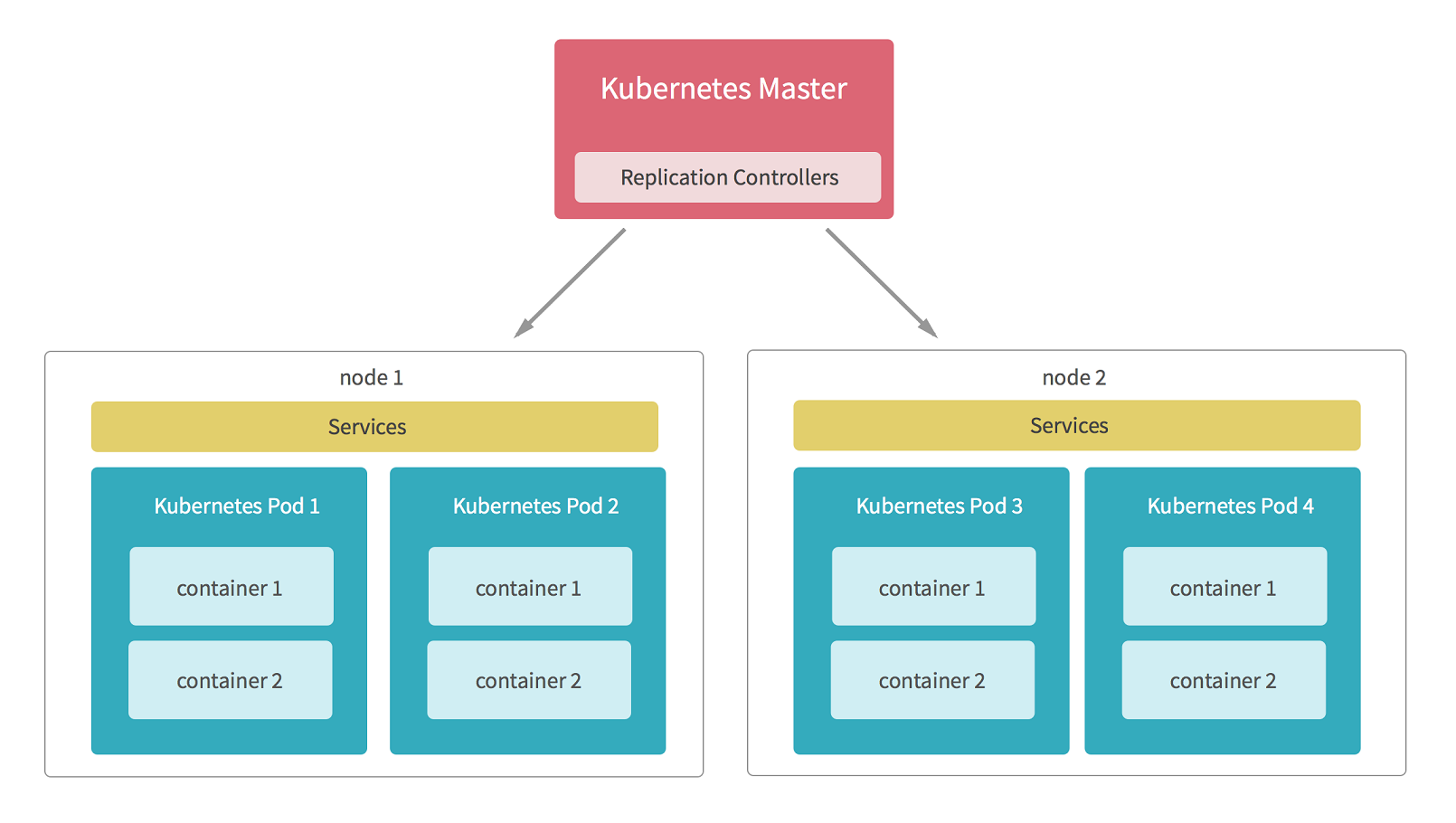

Kubernetes is an orchestrator that automates administrative tasks for containerized servers and services, such as deploying, scaling and restarting them. It was initially developed by Google, announced in 2014, and reached version 1.0 in 2015. Today, Kubernetes is widely known and used for container orchestration.
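
To make "managing containerized services" more concrete, here is a minimal sketch of what you typically hand to Kubernetes. It is a Deployment manifest that describes the desired state: which image to run and how many copies. The manifest is built as a plain Python dictionary and printed as JSON, which `kubectl apply -f` accepts just like YAML. The name `web`, the `nginx:1.25` image, the port and the replica count are placeholders chosen for illustration.

```python
import json


def make_deployment(name: str, image: str, replicas: int, port: int) -> dict:
    """Build a minimal apps/v1 Deployment manifest as a plain dictionary."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            # Desired state: how many copies of the Pod should be running.
            "replicas": replicas,
            # The selector must match the labels of the Pod template below.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    # Placeholder values; redirect the output to a file for kubectl.
    manifest = make_deployment("web", "nginx:1.25", replicas=3, port=80)
    print(json.dumps(manifest, indent=2))
```

If you save the output to a file and run `kubectl apply -f` on it, the cluster is asked to keep three copies of the container running. Kubernetes then works out how to reach and maintain that state.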

When to use Kubernetes

From what has been said so far, Kubernetes can help in the following ways:

Improve the availability of your application: Let Kubernetes manage the number of containers your application runs in, replacing failed ones and removing extra ones once they become redundant. This makes it much more likely that your end customers will always find the service available. Computing power can shrink and expand as needed, which makes the application resilient to traffic spikes.
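
Under the hood, this relies on control loops. A controller repeatedly compares the desired number of Pods with the number actually running and acts on the difference. The following is a toy Python model of that idea, not Kubernetes code. `FakeCluster` and `reconcile` are hypothetical names, and a real ReplicaSet controller watches the API server and uses more elaborate rules to choose which Pods to delete.

```python
class FakeCluster:
    """Hypothetical stand-in for a cluster: it only tracks the names of running Pods."""

    def __init__(self) -> None:
        self.pods = set()
        self._next_id = 0

    def start_pod(self, app: str) -> None:
        self.pods.add(f"{app}-{self._next_id}")
        self._next_id += 1

    def stop_pod(self, name: str) -> None:
        self.pods.discard(name)


def reconcile(cluster: FakeCluster, app: str, desired: int) -> None:
    """One pass of a control loop: compare desired and observed state, then act."""
    observed = sorted(cluster.pods)
    missing = desired - len(observed)
    if missing > 0:
        for _ in range(missing):
            cluster.start_pod(app)  # replace crashed Pods or scale up
    else:
        for name in observed[desired:]:
            cluster.stop_pod(name)  # remove Pods that are no longer needed


if __name__ == "__main__":
    cluster = FakeCluster()
    reconcile(cluster, "web", desired=3)       # starts three Pods
    cluster.stop_pod(sorted(cluster.pods)[0])  # simulate a crashed Pod
    reconcile(cluster, "web", desired=3)       # the loop replaces it
    reconcile(cluster, "web", desired=1)       # traffic drops: extras are removed
    print(sorted(cluster.pods))                # prints ['web-1']
```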

Make your deployment as economical as possible: Running your application in the cloud is often the best option. But how many resources do you need to allocate, and therefore pay for? If you assign too few resources, the service will become unavailable every time traffic spikes. If you assign too many, you will spend too much money waiting for that spike. Kubernetes lets you adjust resources to actual demand instead.

Automatic scaling: By scaling, I mean automatic scaling according to a given set of rules. Kubernetes can automatically create and destroy Pods, adding or removing computing power for a running application.
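
One such rule set is the HorizontalPodAutoscaler. Its documented core formula is desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), and no scaling happens when that ratio is within a tolerance of 1.0 (0.1 by default). Below is a simplified Python sketch of that calculation, clamped to a minimum and maximum replica count. The function and parameter names are my own. The real controller also accounts for missing metrics, Pods that are not ready yet, and stabilization windows.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Simplified HPA rule: scale the replica count by the metric ratio, then clamp."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: leave the replica count unchanged.
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    # Never go outside the bounds you configured.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 Pods averaging 90% CPU against a 60% target: scale up.
    print(desired_replicas(4, current_metric=90, target_metric=60))  # 6
    # 4 Pods averaging 20% CPU against a 60% target: scale down.
    print(desired_replicas(4, current_metric=20, target_metric=60))  # 2
```

The minimum and maximum are what keep autoscaling on a budget. Traffic can push the count up, but never past the ceiling you are willing to pay for.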

Automatic upgrades: If you have hundreds or thousands of nodes and a new security patch is available for the software running on them, upgrading all of them can be a hassle, and doing it by hand is error-prone. With Kubernetes, you can roll out upgraded container images automatically to all replicas of your application, for example images that include patched libraries. With automatic upgrades, you can ensure that:

- security patches are applied promptly, and

- the rollout takes little manual effort.
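
In practice, the most common form of this is updating the container image of a Deployment, for example to one built with a patched library. The Deployment then performs a rolling update, replacing old Pods with new ones gradually. It stays within the bounds set by `maxSurge` and `maxUnavailable`, which both default to 25%. The Python sketch below only computes those bounds, assuming the documented rounding: surge rounds up and unavailable rounds down. `rolling_update_bounds` is a hypothetical helper. Upgrading the nodes' own operating systems is a separate matter that depends on your platform.

```python
import math


def to_pods(value, replicas: int, round_up: bool) -> int:
    """Convert an absolute number or a percentage string such as "25%" to a Pod count."""
    if isinstance(value, str) and value.endswith("%"):
        raw = replicas * int(value[:-1]) / 100
        return math.ceil(raw) if round_up else math.floor(raw)
    return int(value)


def rolling_update_bounds(replicas: int, max_surge="25%", max_unavailable="25%"):
    """Return (max total Pods, min available Pods) during a rolling update."""
    surge = to_pods(max_surge, replicas, round_up=True)               # surge rounds up
    unavailable = to_pods(max_unavailable, replicas, round_up=False)  # unavailable rounds down
    return replicas + surge, replicas - unavailable


if __name__ == "__main__":
    print(rolling_update_bounds(10))  # (13, 8)
```

With 10 replicas and the defaults, the update never runs more than 13 Pods and never drops below 8 available ones. The service keeps answering while the patch rolls out.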

Optimize the use of expensive resources: If you are running on bare-metal servers, you want to assign each container only the memory and disk it actually needs, to make your solution as cost-effective as possible. Kubernetes lets you define lower and upper bounds for each container and operates between them. With the Vertical Pod Autoscaler add-on, it can also tell you when a Pod can be made smaller, or resize it automatically.
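
In a Pod spec, those bounds are the container's resource `requests` (what the scheduler reserves) and `limits` (the cap enforced at runtime). They are written as quantities such as `250m` CPU or `128Mi` memory. The Python sketch below parses a subset of that quantity notation and checks that no request exceeds its limit. `parse_quantity` and `check_resources` are hypothetical helpers, and the values are placeholders.

```python
from fractions import Fraction

# Hypothetical helper table covering only a subset of Kubernetes quantity suffixes.
SUFFIXES = {
    "m": Fraction(1, 1000),                 # milli, e.g. "250m" CPU = 0.25 cores
    "k": 10**3, "M": 10**6, "G": 10**9,     # decimal multiples
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30,  # binary multiples
}


def parse_quantity(q: str) -> Fraction:
    """Convert a quantity string such as "250m" or "128Mi" to an exact number."""
    for suffix, factor in SUFFIXES.items():
        if q.endswith(suffix):
            return Fraction(q[: -len(suffix)]) * factor
    return Fraction(q)  # plain number, no suffix


def check_resources(resources: dict) -> None:
    """Raise if any request is larger than its limit (the API server rejects that too)."""
    for name, limit in resources.get("limits", {}).items():
        request = resources.get("requests", {}).get(name)
        if request is not None and parse_quantity(request) > parse_quantity(limit):
            raise ValueError(f"{name}: request {request} exceeds limit {limit}")


# Container resources as they would appear in a Pod spec (placeholder values).
resources = {
    "requests": {"cpu": "250m", "memory": "128Mi"},  # lower bound: reserved by the scheduler
    "limits": {"cpu": "500m", "memory": "256Mi"},    # upper bound: enforced at runtime
}

if __name__ == "__main__":
    check_resources(resources)
    print(parse_quantity("250m"), parse_quantity("128Mi"))  # 1/4 134217728
```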

Make your application hosting-agnostic: When you are developing an application, you want it to run in any environment, so that you can easily migrate from one provider to another. Containerize your solution and automate its deployment, and your application can run on any cloud provider that offers Kubernetes. This gives you the freedom to choose the provider with the best cost/benefit ratio.

So, those are some scenarios where Kubernetes is worth using: when you need the service to stay available no matter the traffic, and when you need to stay on budget while keeping the application up and running. Kubernetes manages resources flexibly, which makes both goals achievable.

Conditions

To use Kubernetes as discussed in the cases above, you need to design your solution so that it can be containerized, with different services running in different containers. The more modular your application is, the more Kubernetes can help you.

Remember that Kubernetes is about automating tasks. It will not solve all your problems. You still need to develop an application that uses resources efficiently; otherwise, you will end up wasting money. Kubernetes is one more tool at your disposal, not the only one.